How many full flags are there in \(\mathbb{F}_q^n\), and hence how many maximal unipotent subgroups of \(GL_n(\mathbb{F}_q)\) (aka \(p\)-Sylows, where \(p\) is the characteristic of \(\mathbb{F}_q\))? I claim that there are $$(1+q)(1+q+q^2)\cdots (1+q+q^2+\cdots +q^{n-1})=\prod_{i=1}^{n} \frac{1-q^i}{1-q}.$$ Let’s prove this by induction.

The base case where \(n=1\) is trivial: there’s a unique full flag. Now let \(V\) be an \(n\)-dimensional vector space over \(\mathbb{F}_q\). A full flag in \(V\) is the same as a codimension \(1\) subspace \(V'\subset V\) together with a full flag in \(V'\). Each codimension \(1\) subspace contains $$\prod_{i=1}^{n-1} \frac{1-q^i}{1-q}$$ full flags by the induction hypothesis, so it’s enough to show that there are $$\frac{1-q^n}{1-q}$$ codimension \(1\) subspaces. Such a subspace is the vanishing locus of a non-zero linear functional, well-defined up to scaling; there are \(q^n-1\) non-zero linear functionals, and modding out by scaling by \(\mathbb{F}_q^*\) (a group of order \(q-1\) acting freely) gives $$\frac{q^n-1}{q-1}=\frac{1-q^n}{1-q}$$ as desired.
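As a sanity check on the count, here is a small brute-force sketch (not part of the original argument). It enumerates full flags in \(\mathbb{F}_p^n\) for a prime \(p\) by growing chains of subspaces one dimension at a time, and compares the result with the product formula. It only handles prime fields, since it does arithmetic mod \(p\); the helper names `span_with`, `count_full_flags`, and `flag_formula` are hypothetical.

```python
from functools import lru_cache
from itertools import product


def span_with(W, v, p):
    """Return the subspace W + span(v) of F_p^n as a frozenset of tuples.

    Assumes W is already a subspace (closed under addition and scaling).
    """
    return frozenset(
        tuple((w_i + c * v_i) % p for w_i, v_i in zip(w, v))
        for w in W
        for c in range(p)
    )


def count_full_flags(n, p):
    """Count full flags 0 = V_0 < V_1 < ... < V_n = F_p^n by brute force (p prime)."""
    vectors = list(product(range(p), repeat=n))
    zero_space = frozenset([tuple([0] * n)])

    @lru_cache(maxsize=None)
    def flags_from(W):
        # W is the last subspace of a partial flag; count its completions.
        if len(W) == p ** n:
            return 1
        # Every subspace one dimension larger than W is W + span(v) for some v not in W.
        extensions = {span_with(W, v, p) for v in vectors if v not in W}
        return sum(flags_from(W_next) for W_next in extensions)

    return flags_from(zero_space)


def flag_formula(n, q):
    """The claimed count: prod_{i=1}^n (q^i - 1)/(q - 1)."""
    total = 1
    for i in range(1, n + 1):
        total *= (q ** i - 1) // (q - 1)
    return total


for n, p in [(2, 2), (3, 2), (4, 2), (2, 3), (3, 3), (2, 5)]:
    print(n, p, count_full_flags(n, p), flag_formula(n, p))
```

Memoizing on the subspace itself mirrors the induction: the number of ways to finish a flag depends only on its current last subspace.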

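Let’s check Sylow’s third theorem, which says that the number of \(p\)-Sylows is \(1 \bmod p\). Since \(q\) is a power of \(p\), each factor \(1+q+\cdots+q^{i-1}\) is congruent to \(1\) mod \(p\), so the product $$(1+q)(1+q+q^2)\cdots (1+q+q^2+\cdots +q^{n-1})$$ is equal to 1 mod \(p\), as desired.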
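The congruence is easy to spot-check numerically. This minimal sketch (the function name `flag_formula` is a hypothetical helper) evaluates the product for several prime powers \(q=p^e\) and reduces mod the characteristic \(p\):

```python
def flag_formula(n, q):
    """Number of full flags in F_q^n: prod_{i=1}^n (1 + q + ... + q^(i-1))."""
    total = 1
    for i in range(1, n + 1):
        total *= sum(q ** j for j in range(i))
    return total


# (p, e) pairs, so that q = p**e is a prime power of characteristic p.
for p, e in [(2, 1), (2, 3), (3, 2), (5, 1), (7, 2)]:
    q = p ** e
    for n in range(1, 7):
        # Each factor is 1 plus a multiple of q, hence of p.
        assert flag_formula(n, q) % p == 1
print("Number of full flags is 1 mod p in every case checked")
```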

A geometric analogue

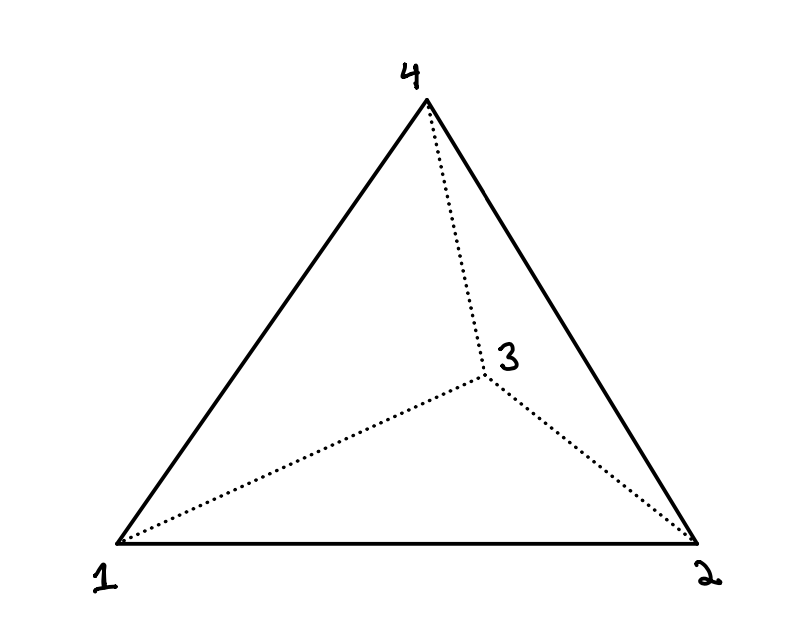

My student Sasha Shmakov made the following observation to me a few months ago: for any field \(k\), maximal unipotent subgroups of \(GL_n(k)\) exist and are all conjugate to one another; this is some kind of algebro-geometric analogue of the first two Sylow theorems, at least for \(p\)-Sylows of \(GL_n(\mathbb{F}_q)\). To see this, note that maximal unipotent subgroups correspond to full flags in \(k^n\), just as over \(\mathbb{F}_q\); full flags exist, and \(GL_n(k)\) acts transitively on them.

He asked: what’s the analogue of the third Sylow theorem? Amazingly, it turns out there is one.

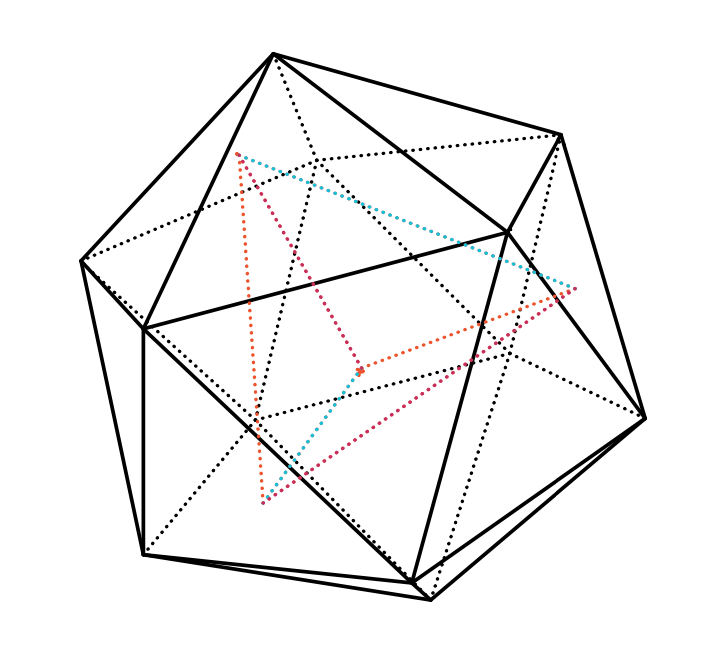

Instead of counting maximal unipotent subgroups (which we can’t do: if \(k\) is infinite, there are infinitely many of them!), we’ll study the parameter space of maximal unipotent subgroups, or equivalently of full flags. This is called the full flag variety \(\text{Fl}_{1, 2, \cdots, n}\).

Let’s write \(G=GL_n\) and let \(B\subset G\) be the stabilizer of a full flag; this is the normalizer of a maximal unipotent subgroup, and is often called a Borel subgroup. The quotient $$\text{Fl}_{1, 2, \cdots, n}:=G/B$$ is (more or less by the orbit-stabilizer theorem) the space of full flags; it’s not obvious, but it follows from general theory, that this quotient naturally has the structure of an algebraic variety.
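Over \(\mathbb{F}_q\) the orbit-stabilizer description can be checked directly: the number of full flags should be \(|GL_n(\mathbb{F}_q)|/|B(\mathbb{F}_q)|\). This sketch assumes the standard orders \(|GL_n(\mathbb{F}_q)|=\prod_{i=0}^{n-1}(q^n-q^i)\) and, taking \(B\) to be the invertible upper-triangular matrices, \(|B(\mathbb{F}_q)|=(q-1)^n q^{n(n-1)/2}\). The function names are hypothetical.

```python
def order_gl(n, q):
    """|GL_n(F_q)|: choose each column outside the span of the previous ones."""
    result = 1
    for i in range(n):
        result *= q ** n - q ** i
    return result


def order_borel(n, q):
    """|B(F_q)| for B = invertible upper-triangular matrices:
    n non-zero diagonal entries and n(n-1)/2 arbitrary entries above the diagonal."""
    return (q - 1) ** n * q ** (n * (n - 1) // 2)


def flag_formula(n, q):
    """prod_{i=1}^n (q^i - 1)/(q - 1)."""
    total = 1
    for i in range(1, n + 1):
        total *= (q ** i - 1) // (q - 1)
    return total


for n in range(1, 6):
    for q in [2, 3, 4, 5, 7, 8, 9]:
        # Orbit-stabilizer: |G/B| = |G| / |B|, which must be an integer.
        assert order_gl(n, q) % order_borel(n, q) == 0
        assert order_gl(n, q) // order_borel(n, q) == flag_formula(n, q)
```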

Let’s work out the geometry of these varieties.

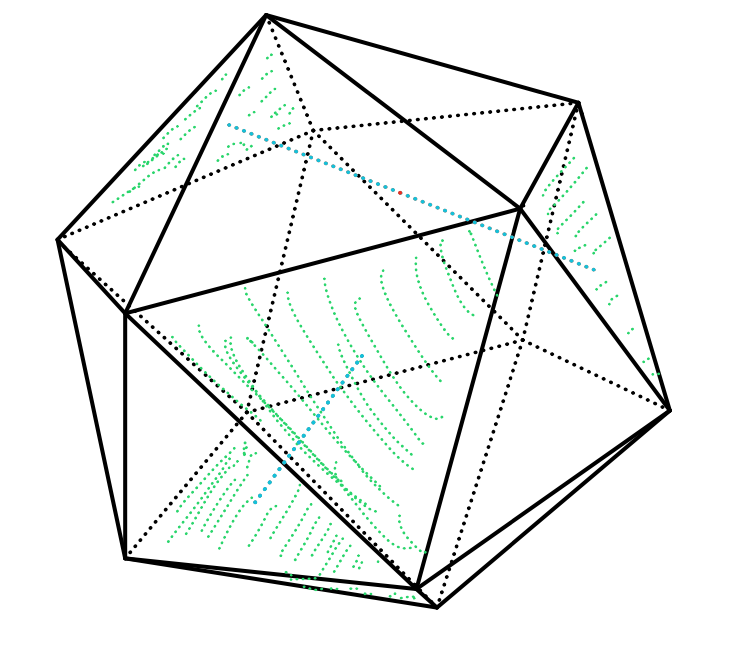

The argument proceeds analogously to the situation over finite fields. Let \(V\) be an \(n\)-dimensional vector space over \(k\), and let \(G(n-1, n)\) be the space of codimension 1 subspaces of \(V\). There is a map $$\text{Fl}_{1, 2, \cdots, n}\to G(n-1, n)$$ given by forgetting all but the codimension 1 subspace in our flag. The fiber of this map over a given codimension 1 subspace \(V'\subset V\) is precisely the space of full flags on \(V'\), so by induction it will suffice to work out the geometry of \(G(n-1,n)\). But \(G(n-1, n)\) is the space of codimension 1 subspaces of an \(n\)-dimensional vector space, or equivalently the space of lines in the dual space \(V^\vee\), so it’s isomorphic to the projective space \(\mathbb{P}^{n-1}\).
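A quick count is consistent with this identification over a prime field. The sketch below (helper names hypothetical) collects the kernels of all non-zero functionals on \(\mathbb{F}_p^n\). It checks that the number of distinct hyperplanes equals the number of lines in the dual space, \((p^n-1)/(p-1)\), and that each hyperplane is cut out by exactly \(p-1\) functionals, one \(\mathbb{F}_p^*\)-orbit.

```python
from collections import Counter
from itertools import product


def kernel(f, p, n):
    """Kernel of the functional x -> sum_i f_i x_i on F_p^n, as a frozenset of tuples."""
    return frozenset(
        x for x in product(range(p), repeat=n)
        if sum(a * b for a, b in zip(f, x)) % p == 0
    )


def hyperplane_counts(n, p):
    """Map each hyperplane of F_p^n to the number of non-zero functionals cutting it out."""
    zero = tuple([0] * n)
    functionals = [f for f in product(range(p), repeat=n) if f != zero]
    return Counter(kernel(f, p, n) for f in functionals)


for n, p in [(2, 2), (3, 2), (3, 3), (2, 5), (4, 3)]:
    counts = hyperplane_counts(n, p)
    assert len(counts) == (p ** n - 1) // (p - 1)    # lines in the dual space
    assert all(c == p - 1 for c in counts.values())  # one scaling orbit each
```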

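We can suggestively write $$\mathbb{P}^n=\frac{\mathbb{A}^{n+1}-\text{pt}}{\mathbb{G}_m},$$ where $$\mathbb{G}_m=\mathbb{A}^1- \text{pt}$$ is the multiplicative group. This should perhaps remind you of the terms $$\frac{1-q^i}{1-q}$$ appearing in the finite field setting: over \(\mathbb{F}_q\), \(\mathbb{A}^i-\text{pt}\) has \(q^i-1\) points and \(\mathbb{G}_m\) has \(q-1\). And indeed, an analogous argument to that case shows that $$\mathbb{P}^n=\mathbb{A}^n\sqcup \mathbb{A}^{n-1}\sqcup \cdots\sqcup \text{pt}$$ (the points with \(x_0\neq 0\) form a copy of \(\mathbb{A}^n\), and the remaining points form a hyperplane \(\mathbb{P}^{n-1}\), to which we apply the same decomposition); that is, the geometric series formula from before makes sense geometrically.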
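The decomposition into affine spaces can be seen concretely on \(\mathbb{F}_p\)-points. Normalize each point of \(\mathbb{P}^n\) so that its first non-zero homogeneous coordinate is 1, then sort points by the position \(j\) of that coordinate. The \(n-j\) coordinates after it are free, so stratum \(j\) is a copy of \(\mathbb{A}^{n-j}\). This sketch works only over a prime field, and the function names are hypothetical:

```python
from itertools import product


def normalized_points(n, p):
    """Points of P^n(F_p), one representative each: first non-zero coordinate equal to 1."""
    points = []
    for x in product(range(p), repeat=n + 1):
        nonzero = [c for c in x if c != 0]
        if nonzero and nonzero[0] == 1:
            points.append(x)
    return points


def stratum_sizes(n, p):
    """Count points by the index j of their first non-zero coordinate."""
    sizes = [0] * (n + 1)
    for x in normalized_points(n, p):
        j = next(i for i, c in enumerate(x) if c != 0)
        sizes[j] += 1
    return sizes


for n, p in [(1, 2), (2, 3), (3, 5)]:
    # Stratum j is a copy of A^(n-j), with p^(n-j) points.
    assert stratum_sizes(n, p) == [p ** (n - j) for j in range(n + 1)]
    # Total: 1 + p + ... + p^n = (p^(n+1) - 1)/(p - 1).
    assert len(normalized_points(n, p)) == (p ** (n + 1) - 1) // (p - 1)
```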

For the experts: we’ve shown that the map $$\text{Fl}_{1, 2, \cdots, n}\to G(n-1, n)$$ has fibers isomorphic to flag varieties, but it’s perhaps not obvious that this bundle is Zariski-locally trivial. There are a number of ways to see this, but I think the easiest is to observe that it follows from (Grothendieck’s form of) Hilbert’s Theorem 90.

Formulating the third Sylow theorem

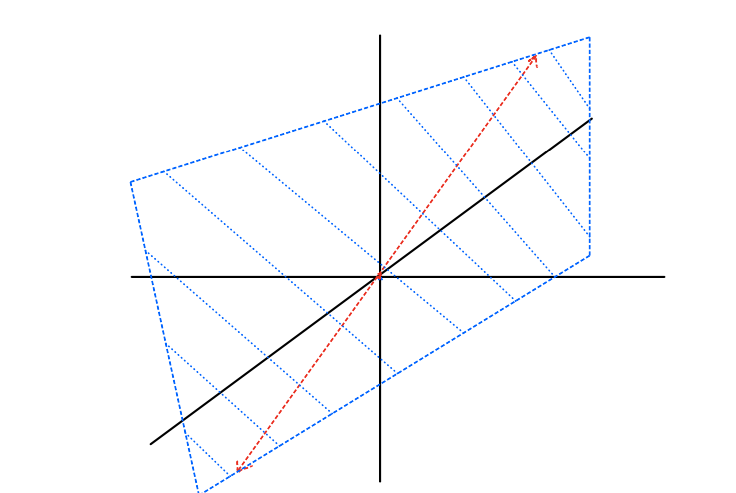

We’re now ready to make sense of a geometric formulation of the third Sylow theorem. We’re going to work in the Grothendieck ring of varieties over \(k\), denoted \(K_0(\text{Var}_k)\). This is the free Abelian group on isomorphism classes \([X]\) of varieties \(X\) over \(k\), modulo the relation that $$[X]=[Y]+[X\setminus Y]$$ for \(Y\subset X\) a closed subvariety. Multiplication comes from the Cartesian product: $$[X]\cdot [Y]=[X\times Y].$$ This ring has a distinguished element $$\mathbb{L}:=[\mathbb{A}^1],$$ the class of the affine line.
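For instance, the formula for \([\mathbb{P}^n]\) stated next follows from this relation alone. Writing \(x_0,\ldots,x_n\) for homogeneous coordinates, the hyperplane \(\{x_0=0\}\cong\mathbb{P}^{n-1}\) is closed in \(\mathbb{P}^n\) with complement \(\{x_0\neq 0\}\cong\mathbb{A}^n\), and \([\mathbb{A}^n]=\mathbb{L}^n\) because \(\mathbb{A}^n=(\mathbb{A}^1)^n\). A worked version of the induction:

```latex
\begin{aligned}
[\mathbb{P}^n] &= [\{x_0 \neq 0\}] + [\{x_0 = 0\}]
               = \mathbb{L}^n + [\mathbb{P}^{n-1}] \\
               &= \mathbb{L}^n + \mathbb{L}^{n-1} + [\mathbb{P}^{n-2}]
               = \cdots
               = \mathbb{L}^n + \mathbb{L}^{n-1} + \cdots + \mathbb{L} + [\mathbb{P}^0],
\end{aligned}
```

with \([\mathbb{P}^0]=[\text{pt}]=1\), the unit of the ring.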

So for example, from what we observed above, we have $$[\mathbb{P}^n]=\mathbb{L}^n+\mathbb{L}^{n-1}+\cdots+1.$$ And indeed, by the argument from above (plus the Zariski-local triviality of the fiber bundle $$\text{Fl}_{1, 2, \cdots, n}\to G(n-1, n)$$ discussed in the last paragraph of the previous section, which ensures that the class of the total space is the product of the classes of the base and the fiber), we can write $$[\text{Fl}_{1, 2, \cdots, n}] = \prod_{i=1}^n (1+\mathbb{L}+\cdots +\mathbb{L}^{i-1})$$ in \(K_0(\text{Var}_k)\), exactly in analogy to the situation over finite fields.
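For \(k=\mathbb{F}_q\), counting \(\mathbb{F}_q\)-points respects both the cut-and-paste relation and products, and sends \(\mathbb{L}\) to \(q\). So substituting \(\mathbb{L}=q\) in this product should recover the finite-field count. A minimal sketch, storing polynomials in \(\mathbb{L}\) as integer coefficient lists (a representation chosen only for illustration; the function names are hypothetical):

```python
def poly_mul(a, b):
    """Multiply polynomials in L given as coefficient lists (index = power of L)."""
    out = [0] * (len(a) + len(b) - 1)
    for i, x in enumerate(a):
        for j, y in enumerate(b):
            out[i + j] += x * y
    return out


def flag_class(n):
    """Coefficients of prod_{i=1}^n (1 + L + ... + L^(i-1))."""
    result = [1]
    for i in range(1, n + 1):
        result = poly_mul(result, [1] * i)  # [P^(i-1)] = 1 + L + ... + L^(i-1)
    return result


def evaluate(coeffs, q):
    """Substitute L = q."""
    return sum(c * q ** k for k, c in enumerate(coeffs))


print(flag_class(3))  # [1, 2, 2, 1]

for n in range(1, 6):
    coeffs = flag_class(n)
    for q in [2, 3, 4, 5]:
        expected = 1
        for i in range(1, n + 1):
            expected *= (q ** i - 1) // (q - 1)
        assert evaluate(coeffs, q) == expected
```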

So here is an analogue of the third Sylow theorem, which we’ve just proven:

Theorem. We have $$[\text{Fl}_{1, 2, \cdots, n}]\equiv 1 \pmod{\mathbb{L}}$$ in \(K_0(\text{Var}_k)\).
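Spelled out, the congruence comes straight from the product formula. The \(i=1\) factor is \(1\), and for \(i\ge 2\) each factor is \(1\) plus a multiple of \(\mathbb{L}\):

```latex
[\text{Fl}_{1, 2, \cdots, n}]
  = \prod_{i=2}^{n} \bigl(1 + \mathbb{L}\,(1 + \mathbb{L} + \cdots + \mathbb{L}^{i-2})\bigr)
  \equiv \prod_{i=2}^{n} 1 = 1 \pmod{\mathbb{L}}.
```

This is the same computation as the finite-field check of Sylow’s third theorem, with \(\mathbb{L}\) in place of \(q\) and the ideal \((\mathbb{L})\) in place of \((p)\).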

That is, the class of the space of maximal unipotent subgroups in \(GL_n\) (which we can perhaps think of as \(\mathbb{L}\)-Sylow subgroups) is congruent to 1 modulo \(\mathbb{L}\). In fact (for the experts), the analogous statement holds for split reductive groups over any field.

In a future post I’ll discuss analogies for prime-to-\(p\) Sylows, and what happens for non-split groups, where things get substantially more complicated!